Introduction

Selecting the right analytical engine is not about choosing a “better” tool, but about aligning technology with the scale, workload patterns, and architectural goals of your system. At the same time, for many organizations—especially small and mid-sized enterprises (SMEs)—this decision is increasingly influenced by the need to control costs, avoid vendor lock-in, and maintain architectural flexibility.

In recent years, proprietary cloud data warehouses have made it easier to get started with analytics, but they often come with escalating costs tied to data volume, compute usage, and concurrency. As data grows and usage scales, these platforms can become financially unsustainable, particularly for organizations looking to build long-term, production-grade analytics capabilities.

This is where open-source, self-hosted analytical engines like DuckDB and ClickHouse provide a compelling alternative. Both technologies offer high-performance analytics without the licensing overhead, enabling teams to design cost-efficient data platforms that scale on their own terms. With full control over infrastructure and deployment, organizations can optimize resource usage, tailor performance to their needs, and build systems that are both economically sustainable and technically robust.

While both DuckDB and ClickHouse are production-capable systems, they serve different layers of the analytics stack. Understanding how to position them effectively allows organizations not only to meet performance and scalability requirements, but also to significantly reduce total cost of ownership (TCO) compared to traditional proprietary solutions.

A modern data architecture can benefit significantly from using them together in a complementary way, rather than treating them as competitors. For a quick reference, jump to the section “Choosing ClickHouse and DuckDB for Production-Grade Data Infrastructure,” or follow along with the article.

In the modern world of high-performance data systems, simply being fast is no longer sufficient. As organizations scale, the expectations from analytics platforms evolve from basic reporting to real-time, interactive, and highly reliable decision-making systems. To meet these demands, a truly enterprise-grade analytics architecture must effectively handle the three critical dimensions of big data—the Volume, Velocity, and Variety of incoming data—without compromising on stability, scalability, or performance.

However, scaling analytics is not just about storing more data or running faster queries—it introduces a fundamental challenge often referred to as the “Performance Wall.” This is the critical tipping point where traditional relational databases and legacy analytical systems begin to struggle. As data volumes grow exponentially and concurrent user queries increase, these systems often exhibit noticeable slowdowns, inefficient query execution, increased latency, and in worst cases, system failures due to resource contention.

This performance bottleneck becomes especially evident in real-world production environments where workloads are unpredictable and diverse. Ad-hoc queries, complex joins, aggregations over large datasets, and simultaneous dashboard interactions can quickly overwhelm systems that were not designed for analytical scale. As a result, organizations are forced to rethink their data architecture, moving toward modern analytical engines that are optimized for columnar storage, in-memory processing, and distributed execution.

In this context, technologies like DuckDB and ClickHouse emerge as powerful solutions—each designed to address different layers of the analytics stack. While one focuses on embedded, local, and high-efficiency query processing, the other excels at handling massive-scale, distributed analytical workloads. Understanding how to position these tools effectively is key to overcoming the performance wall and building a scalable, future-ready analytics ecosystem.

DuckDB: The Lightweight Analytical Workhorse

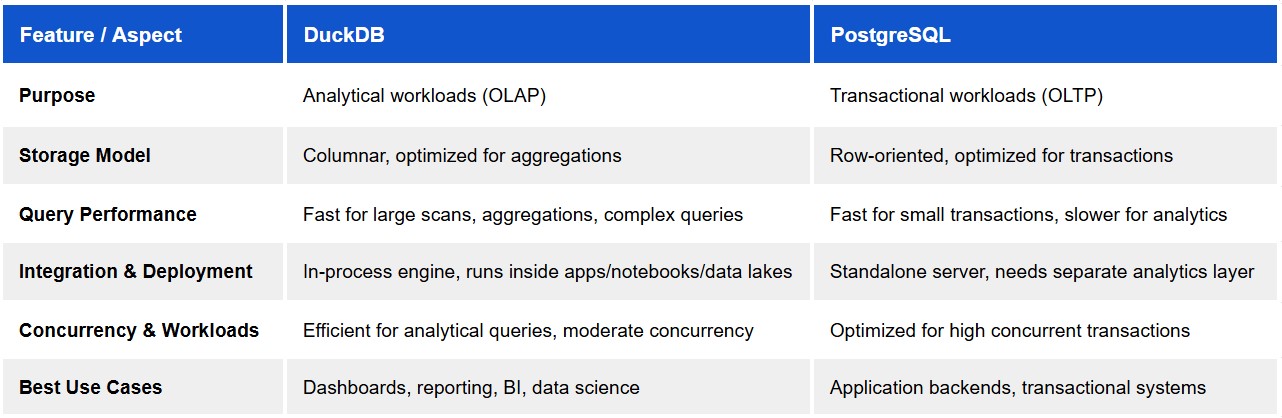

DuckDB is often described as “SQLite for analytics,” and that analogy holds true when viewed through the lens of its architecture and design philosophy. It is an embedded OLAP (Online Analytical Processing) database engineered for efficient analytical workloads on a single node. With a columnar execution engine, vectorized processing, and zero administrative overhead, DuckDB delivers high-performance analytics in a compact and highly efficient footprint.

Beyond its simplicity, DuckDB is a powerful and practical choice for small to medium-scale analytical systems where operational complexity needs to remain low, but performance and reliability are still critical. It fits seamlessly into environments such as departmental analytics platforms, mid-sized applications, internal reporting systems, and embedded analytics within applications where a full distributed data warehouse may be overkill.

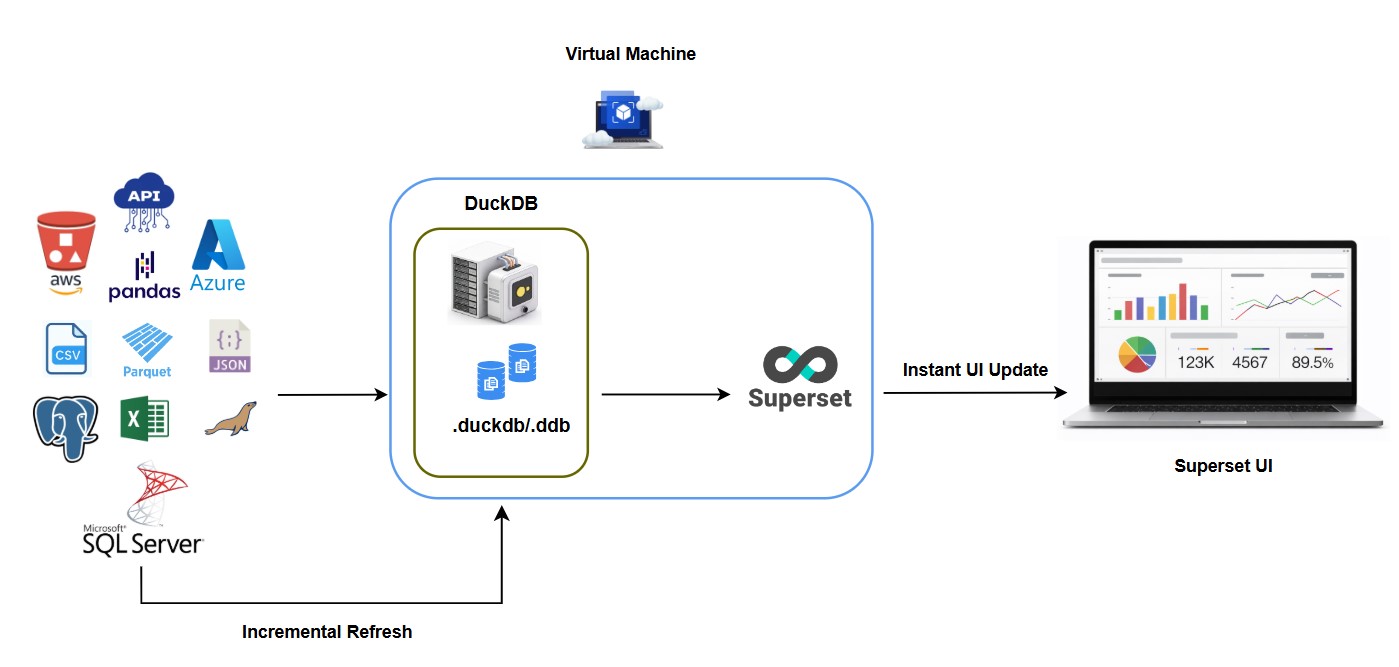

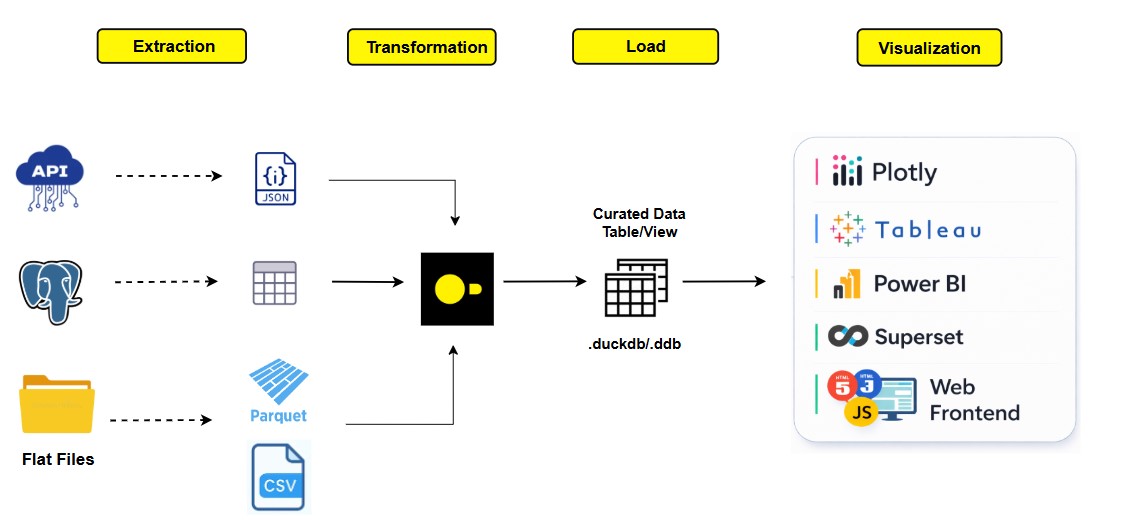

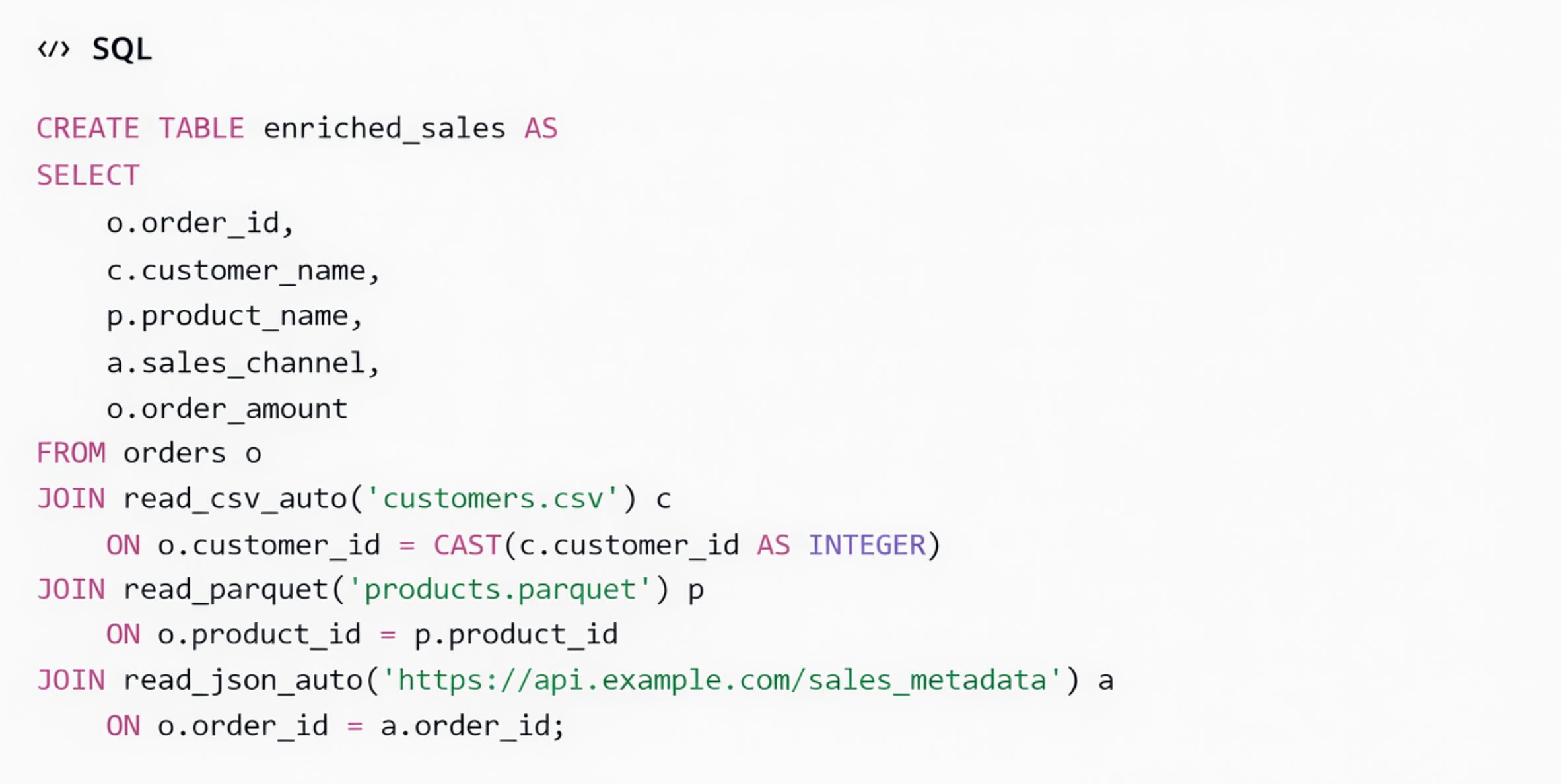

DuckDB supports direct querying over modern data formats such as Parquet, CSV, and JSON, enabling teams to build efficient data pipelines without the need for heavy ETL processes. Its strong integration with ecosystems like Python and R makes it suitable not just for exploration, but also for production-grade analytical workflows, where data transformation, aggregation, and reporting can be executed close to the source of the data. This reduces data movement, simplifies architecture, and improves overall system responsiveness.

In small to medium-scale deployments, DuckDB can effectively serve as:

- A primary analytical engine for applications handling data volumes ranging from a few gigabytes up to several hundred gigabytes, and in many cases even low terabyte-scale datasets, depending on available system memory and workload characteristics

- An embedded analytics layer within data-driven products

- A high-performance query engine for batch processing and scheduled reporting

- A lightweight alternative to traditional OLAP systems for teams that want speed without infrastructure complexity

Its ability to execute complex analytical queries with minimal setup makes it highly valuable in production scenarios where agility, cost-efficiency, and maintainability are key considerations.

However, DuckDB is fundamentally designed around a single-node execution model, and this design defines its operational boundary. As data scales beyond the limits of a single machine, or as the system begins to experience **moderate to high levels of concurrent access**, such as multiple users or services executing analytical queries simultaneously, its capabilities reach a natural threshold.

While DuckDB supports concurrent reads and controlled write operations, it is not optimized for high-concurrency, multi-user environments where dozens or hundreds of queries may run in parallel. In such scenarios, resource contention, query queuing, and write serialization can impact performance.

As these demands grow, organizations typically evolve toward distributed, server-based systems that support horizontal scaling, workload isolation, and efficient multi-node query execution.

This positions DuckDB not as a limitation, but as a strategic building block—an efficient, high-performance engine that excels within its domain and complements larger distributed systems when scalability requirements increase.

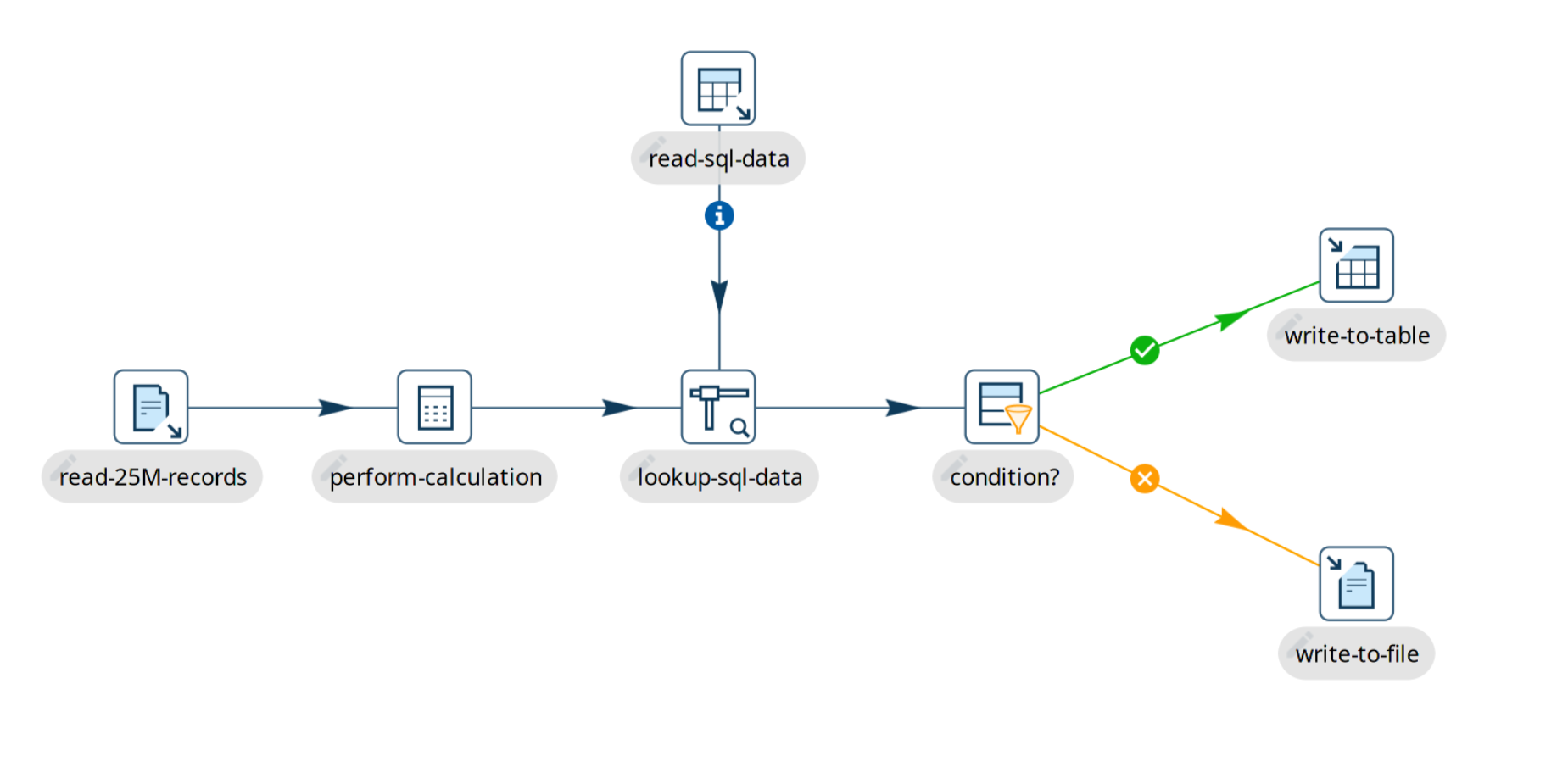

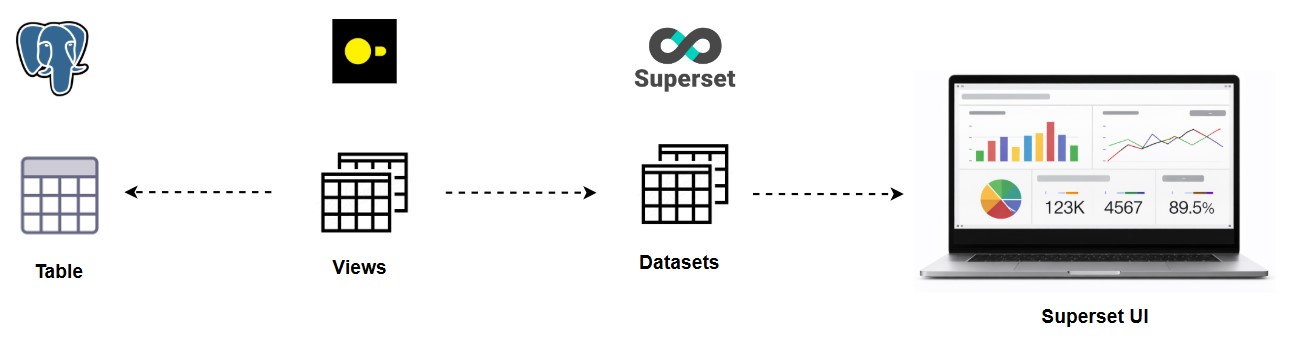

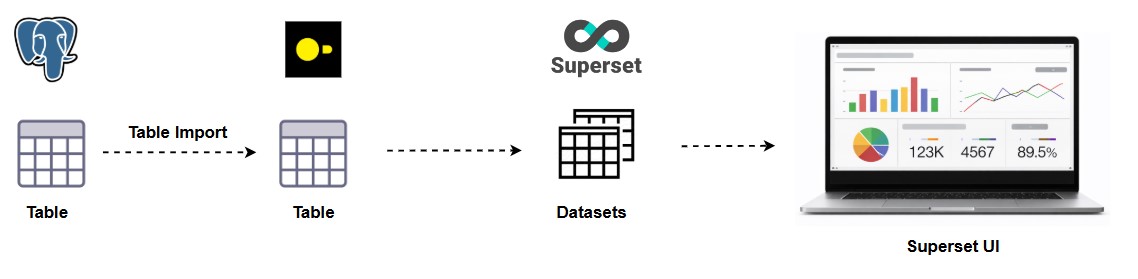

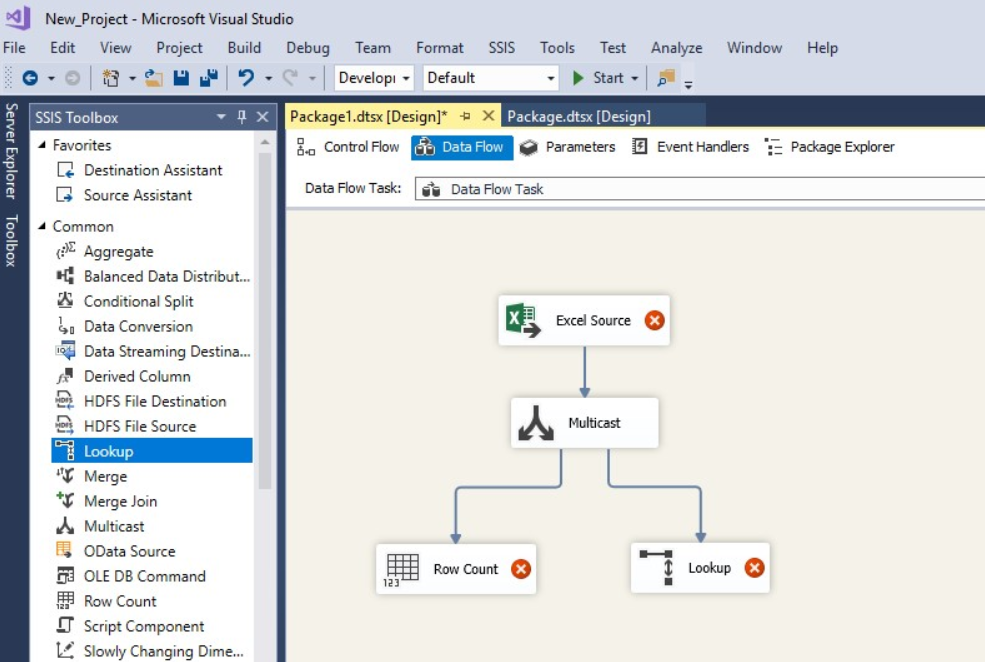

Querying & Analysis

DuckDB’s in-process, columnar execution engine makes it possible to run large analytical queries directly on datasets from multiple sources with minimal infrastructure, making it ideal for powering dashboards and embedded analytics in enterprise applications. For a practical perspective on how DuckDB can accelerate enterprise analytics workflows and simplify architectural complexity, see this overview: “DuckDB for Enterprise Analytics: Fast Analytics Without Heavy Data Warehouses.”

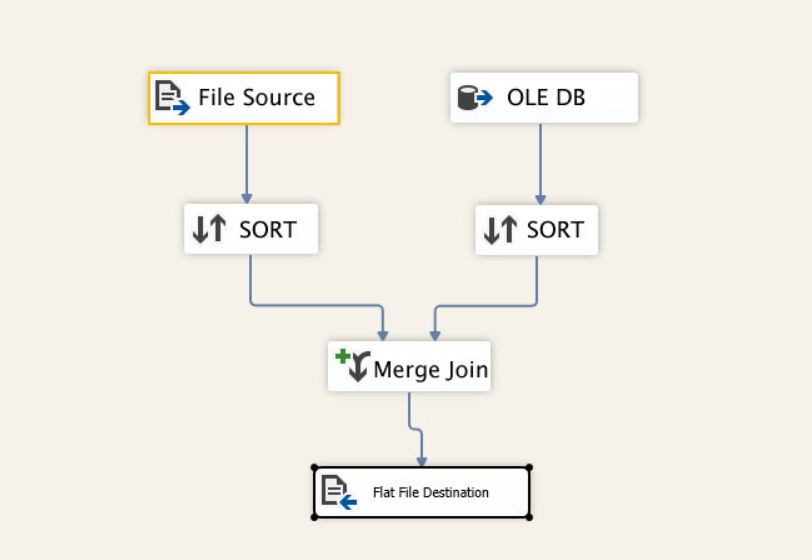

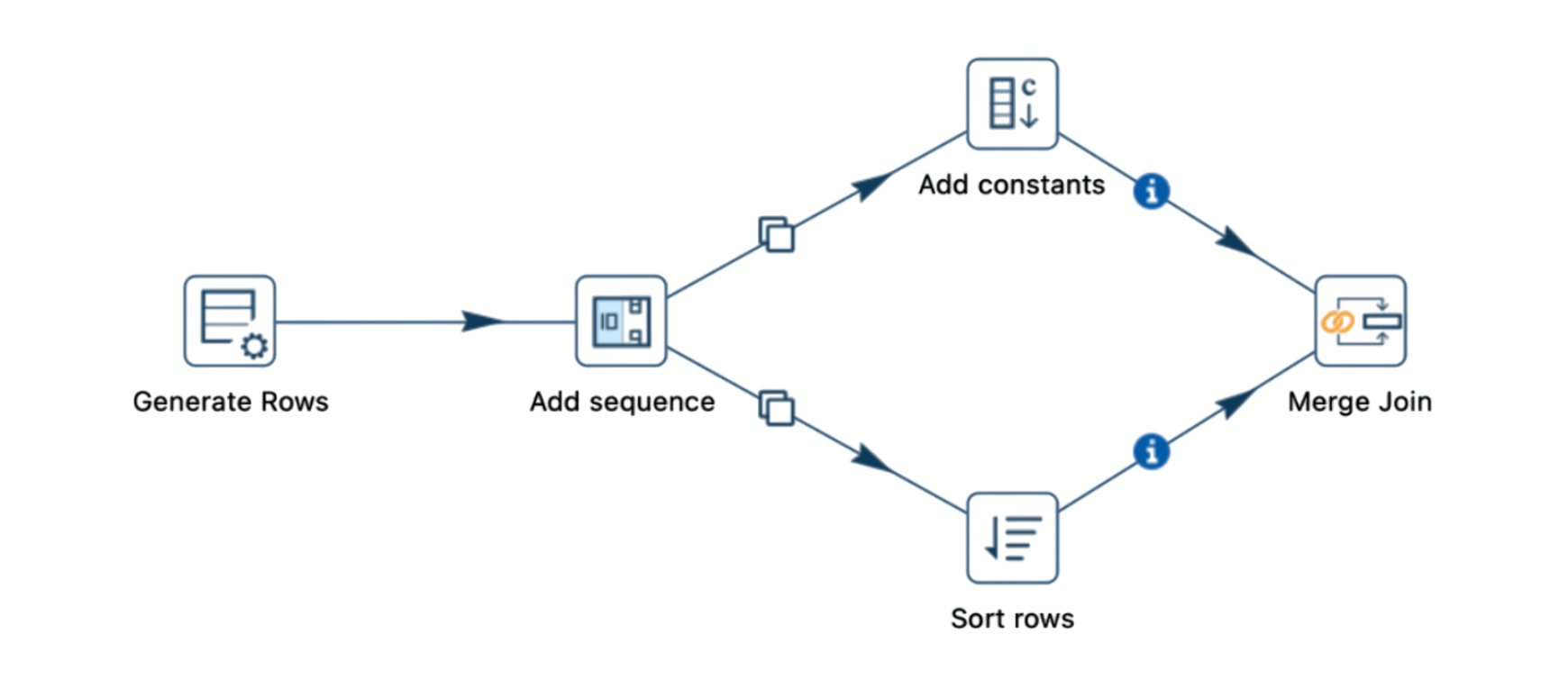

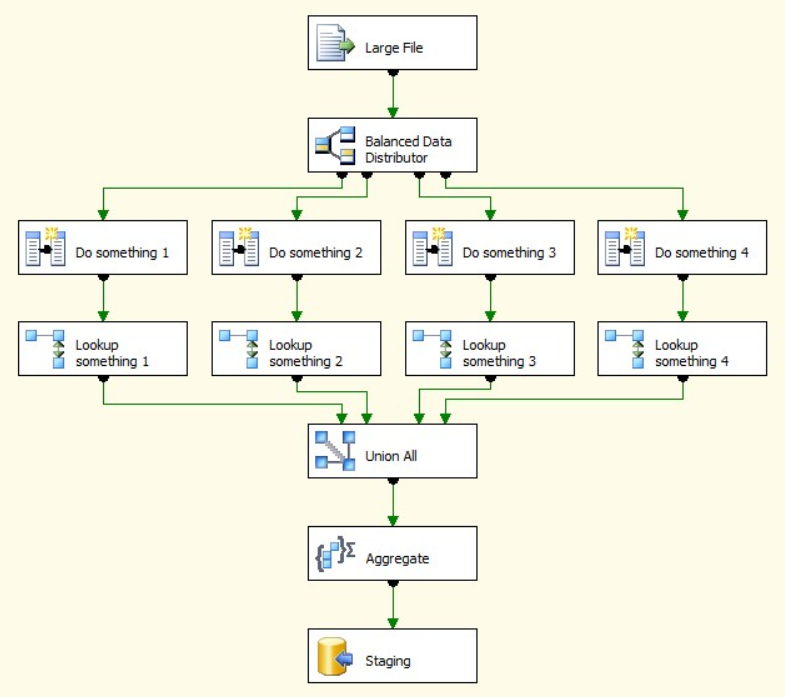

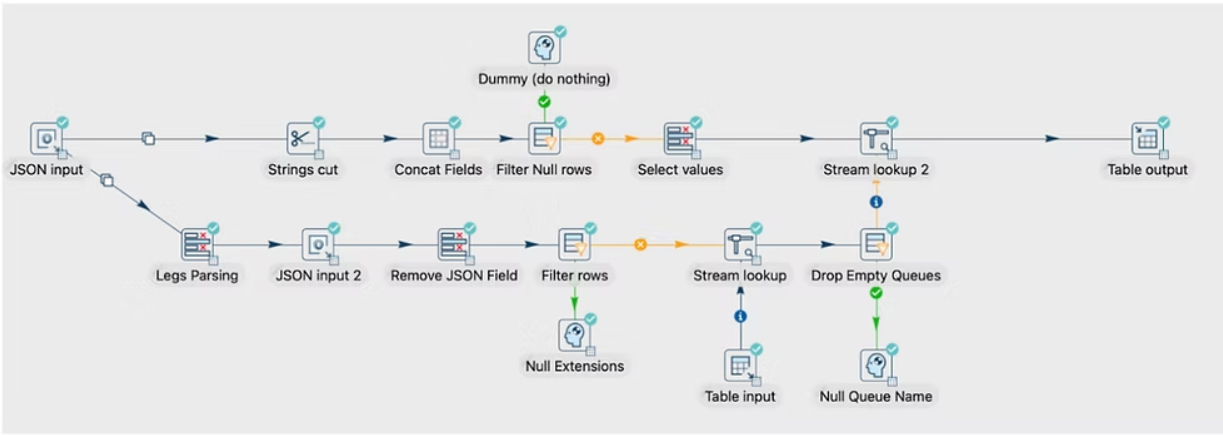

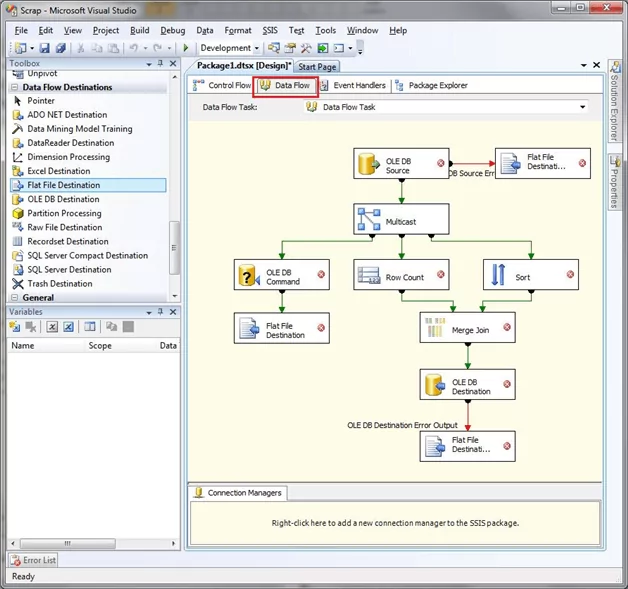

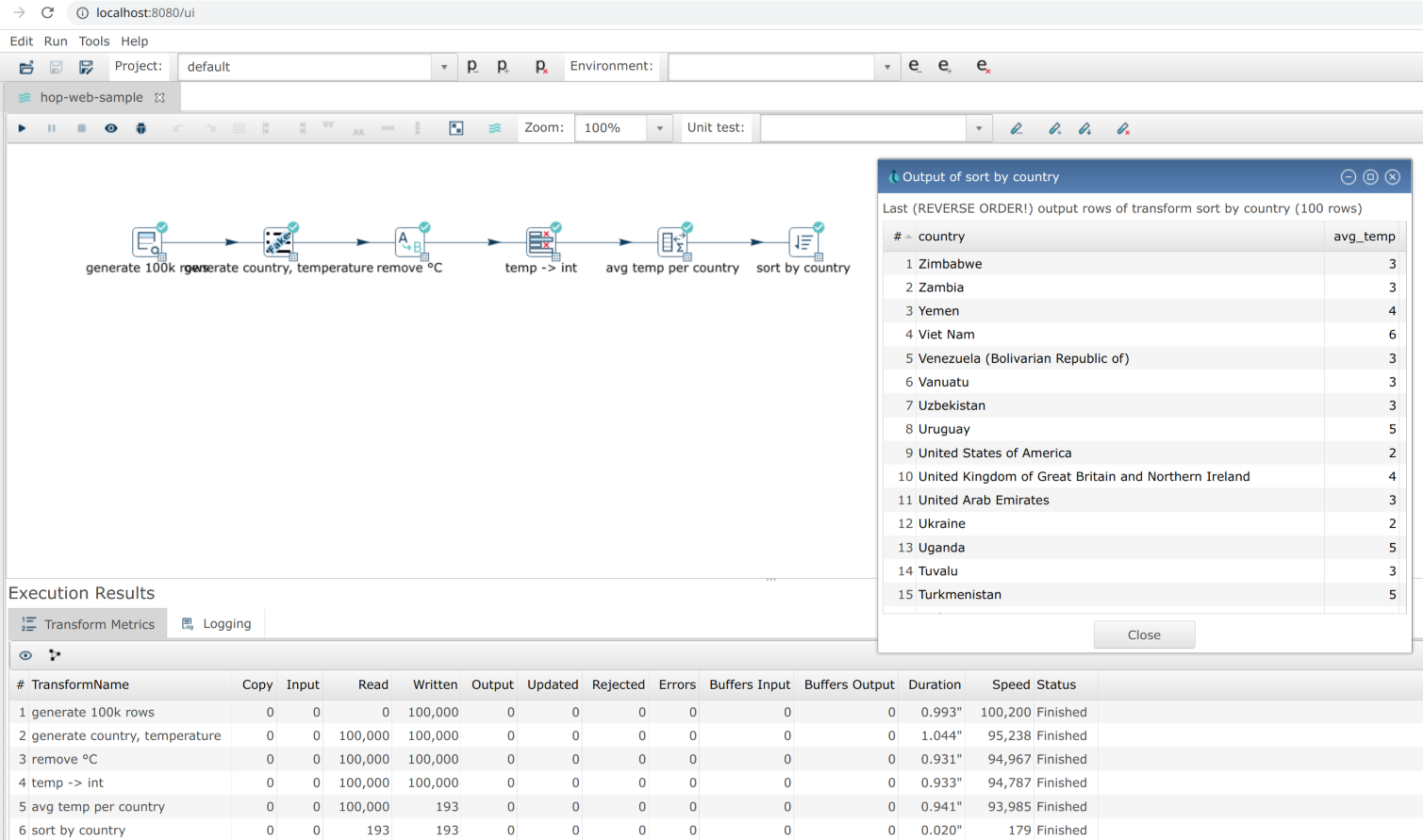

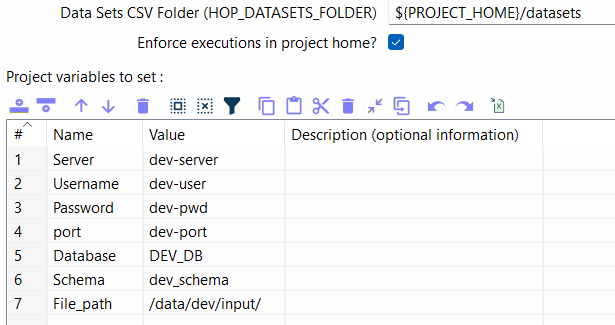

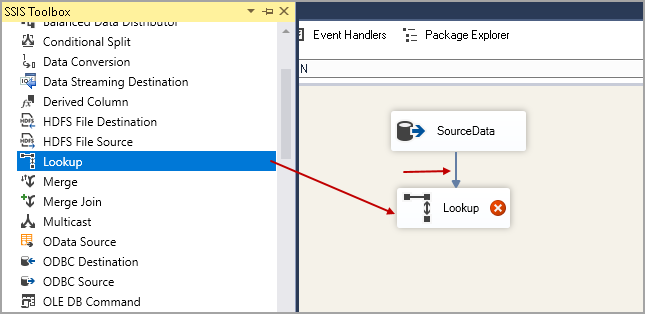

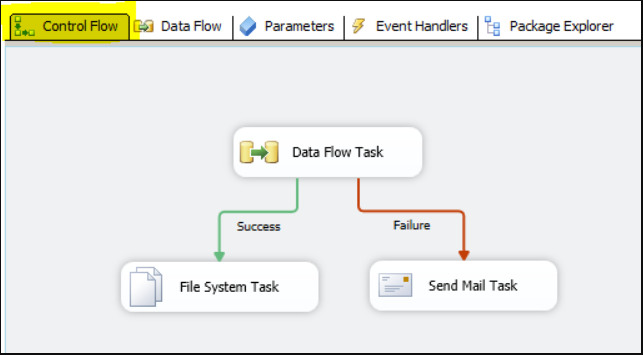

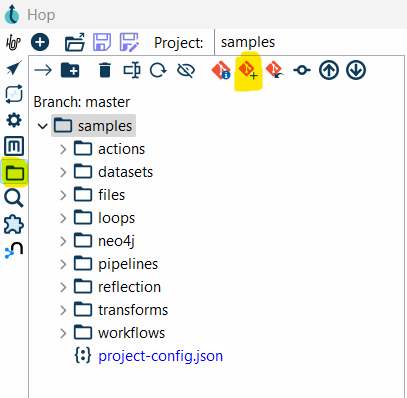

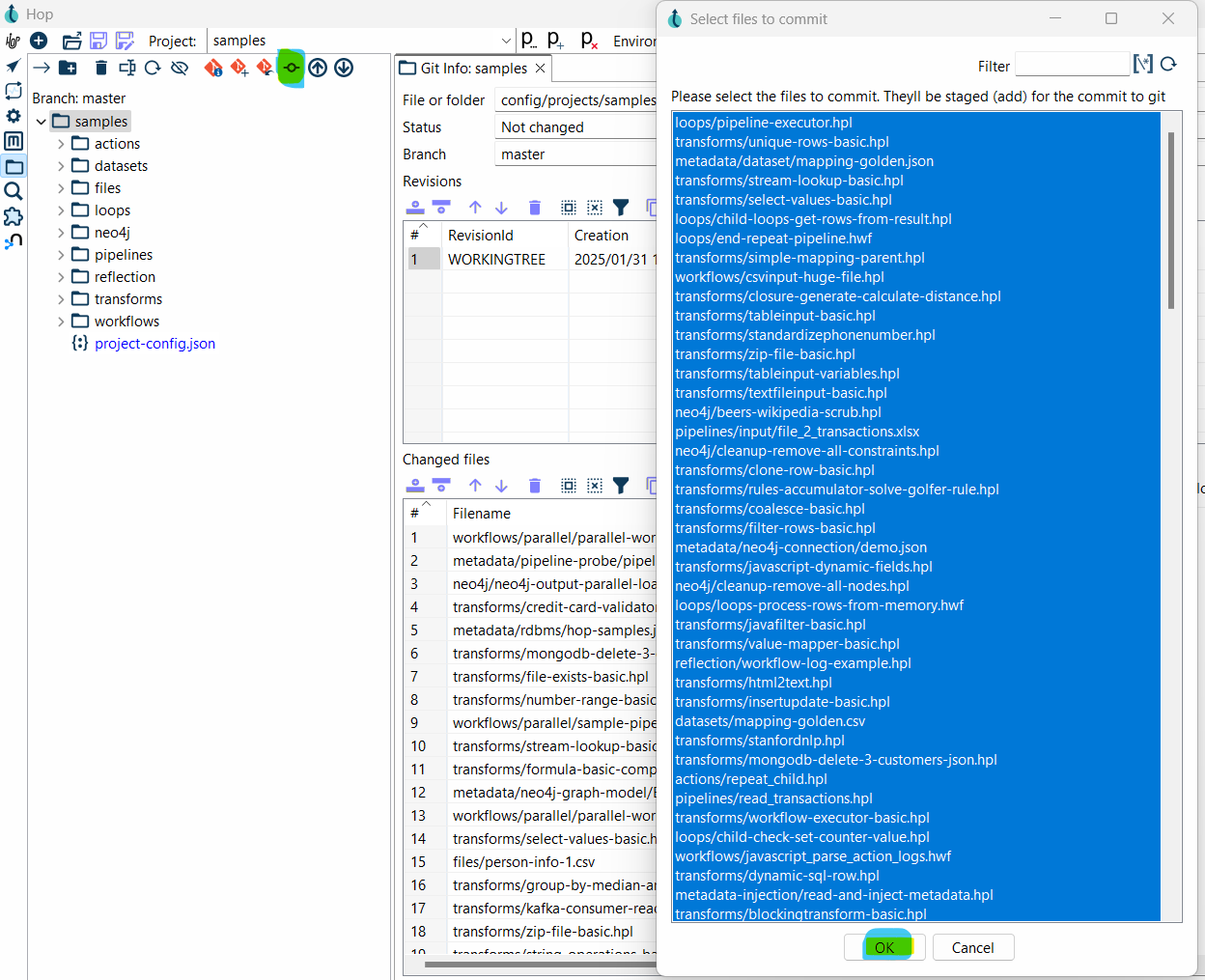

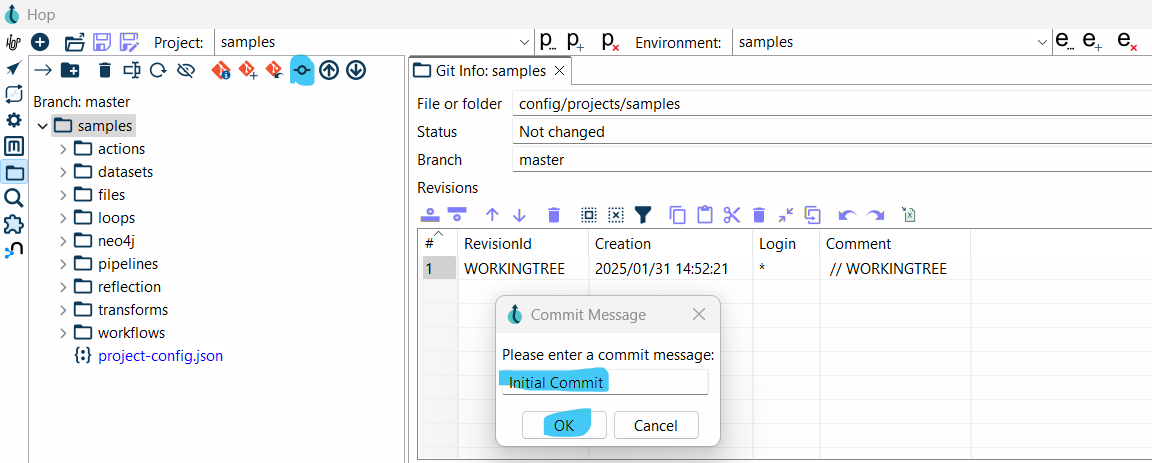

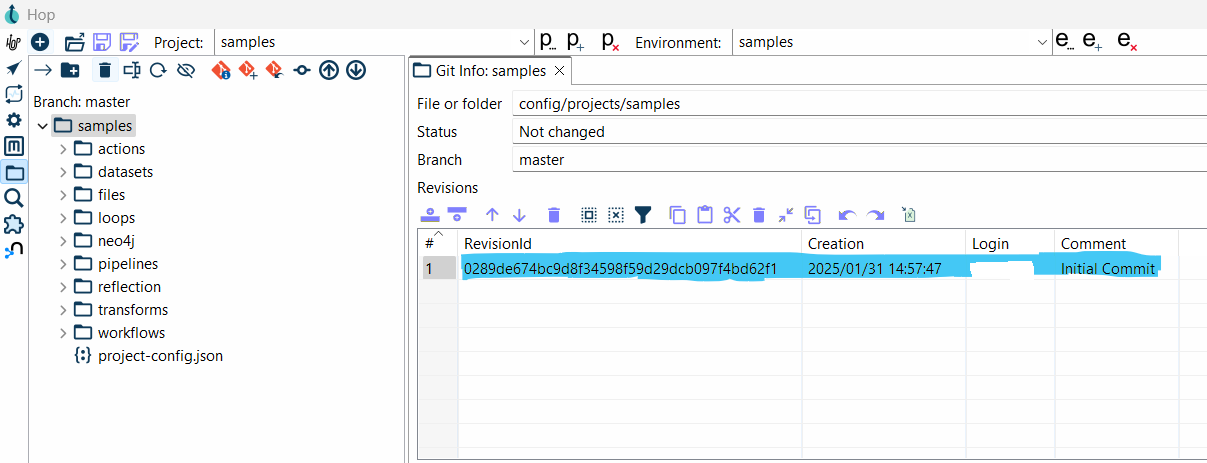

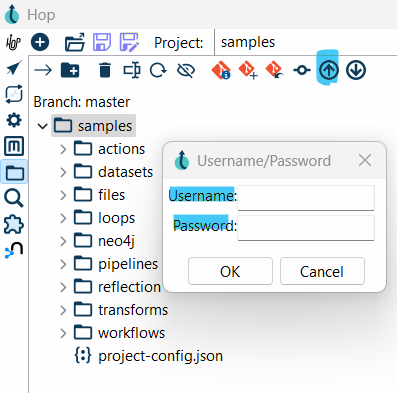

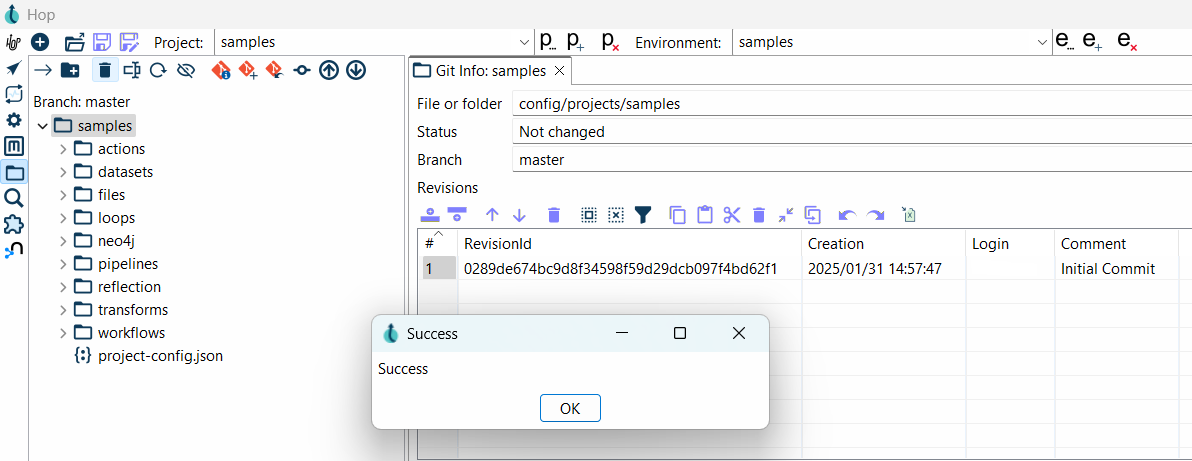

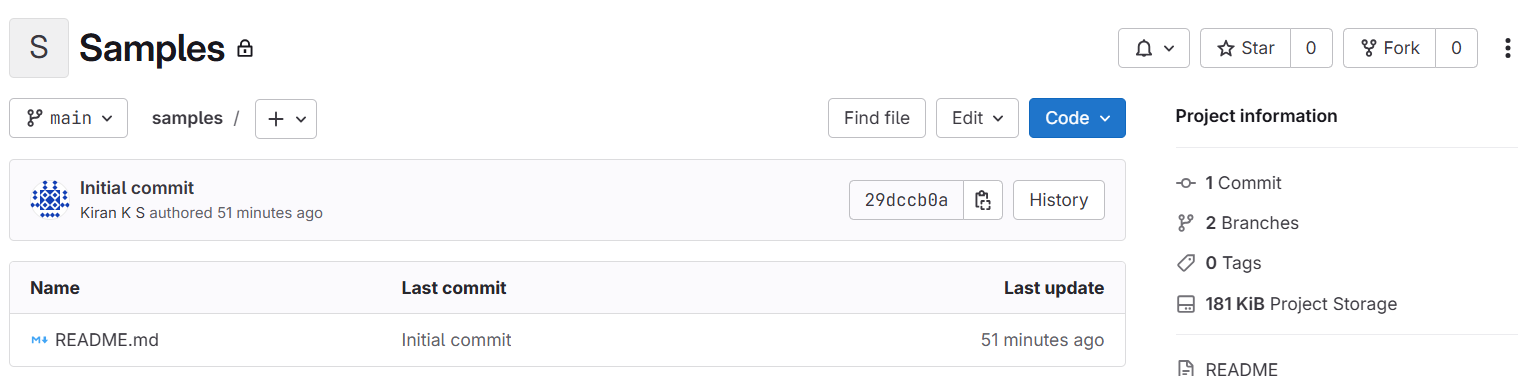

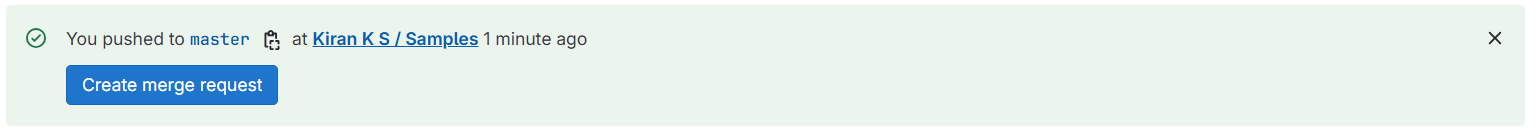

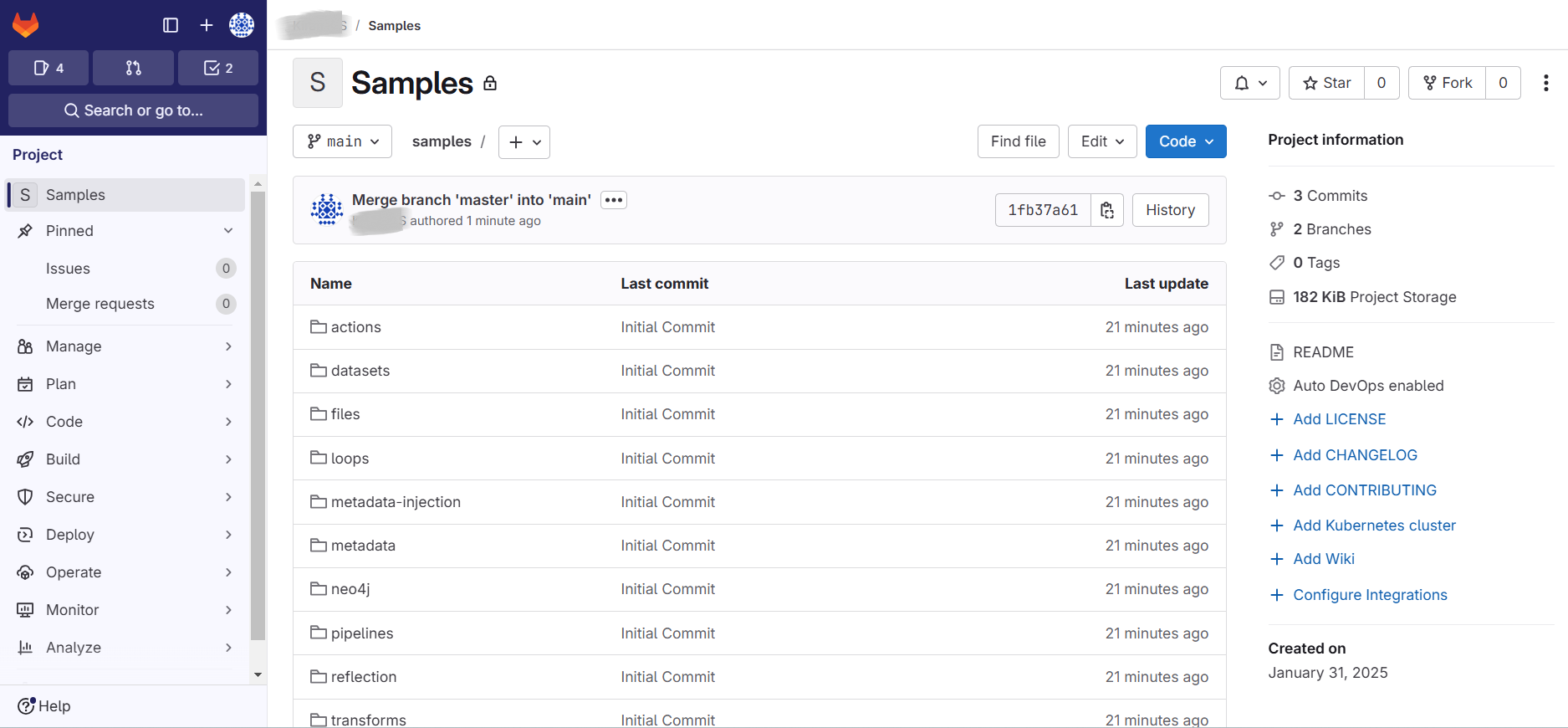

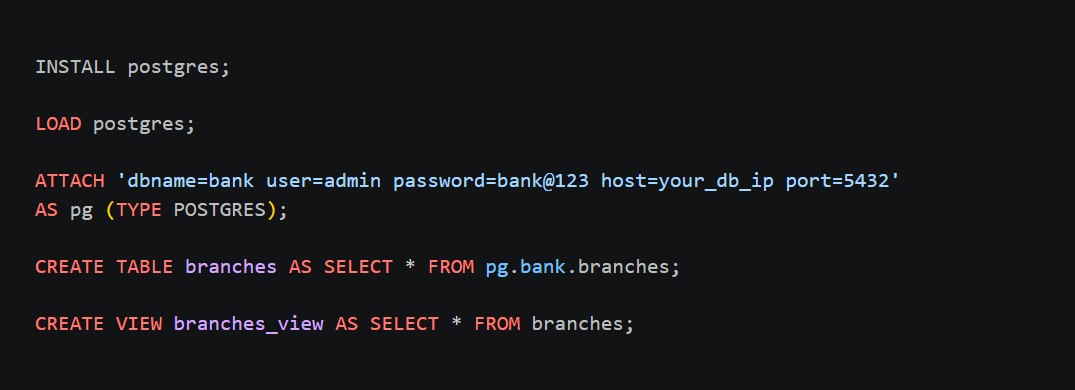

Importing tables from the source database

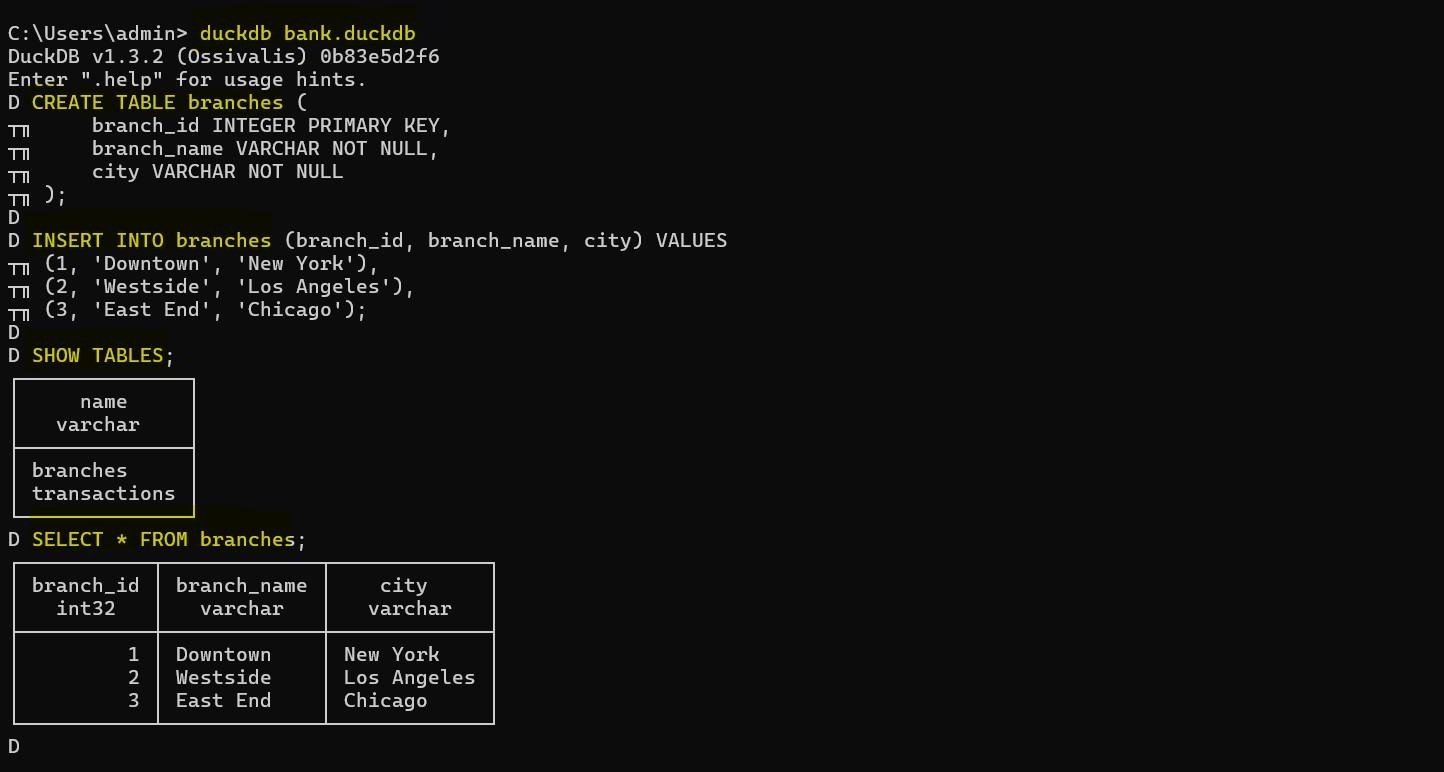

Getting started with DuckDB

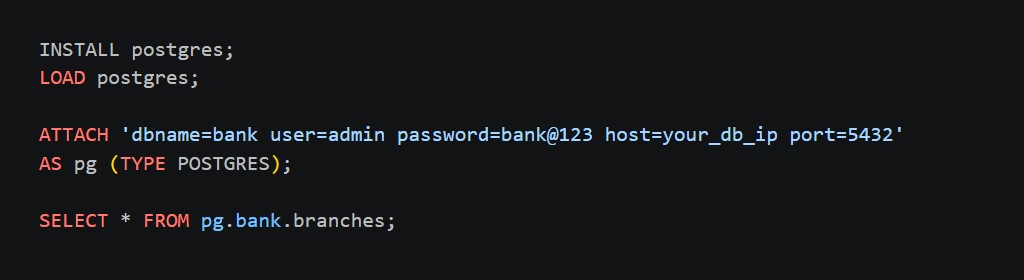

Live querying on tables from different source databases

ClickHouse: The Enterprise Analytics Powerhouse

ClickHouse has established itself as a leading solution for high-performance, large-scale analytics, particularly in environments where terabyte-scale datasets must be queried with sub-second latency. Built from the ground up as a distributed, column-oriented OLAP (Online Analytical Processing) database, ClickHouse is purpose-engineered for organizations that have outgrown traditional relational systems and need to process and analyze massive volumes of data with speed and efficiency.

At its core, ClickHouse is optimized for high-throughput analytical workloads, enabling the execution of complex queries over billions of rows while maintaining exceptional performance. Its architecture leverages advanced techniques such as columnar storage, vectorized query execution, data compression, and parallel processing across distributed nodes. This allows it to deliver consistent performance even under heavy query loads and large-scale data growth.
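The effect of column-oriented compression can be illustrated with a toy model in plain Python. This is not ClickHouse's implementation: it simply delta-encodes a hypothetical timestamp column and uses `zlib` as a stand-in for a general-purpose codec, to show why storing similar values together tends to compress well.

```python
import struct
import zlib


def delta_encode(values):
    """Keep the first value, then store each difference from the previous value."""
    return [values[0]] + [b - a for a, b in zip(values, values[1:])]


def delta_decode(deltas):
    """Rebuild the original values by accumulating the differences."""
    out = [deltas[0]]
    for d in deltas[1:]:
        out.append(out[-1] + d)
    return out


def compressed_size(values):
    """Size in bytes after packing as 64-bit integers and compressing with zlib."""
    raw = struct.pack(f"<{len(values)}q", *values)
    return len(zlib.compress(raw))


# Hypothetical column of event timestamps, one event per second.
timestamps = [1_700_000_000 + i for i in range(10_000)]
deltas = delta_encode(timestamps)

plain_bytes = compressed_size(timestamps)
delta_bytes = compressed_size(deltas)
print(plain_bytes, delta_bytes)
```

Because the column holds one kind of value, an encoding tailored to it (here, differences between neighbours) turns the data into a highly repetitive sequence that the compressor can shrink much further than the raw values.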

ClickHouse is particularly well-suited for enterprise-grade analytics platforms, where data originates from multiple sources and must be aggregated, transformed, and queried in near real time. It is widely used in scenarios such as:

- Real-time dashboards and monitoring systems

- Customer analytics and behavioral tracking

- Log and event data analysis at scale

- Financial reporting and operational intelligence systems

- Ad-tech and telemetry pipelines requiring high ingest and query throughput

One of ClickHouse’s key strengths lies in its ability to simplify and consolidate data infrastructure. Traditionally, organizations dealing with massive datasets are forced to rely on a fragmented ecosystem of tools—separate systems for storage, processing, aggregation, and querying. This often leads to increased operational complexity, higher latency, and escalating cloud costs.

ClickHouse addresses this challenge by serving as a unified analytical engine capable of handling both storage and query execution at scale. Its efficient compression techniques and optimized storage formats significantly reduce disk usage, while its distributed architecture ensures that workloads can be scaled horizontally as data and user demands grow. This not only improves performance but also helps control infrastructure costs, especially in cloud-native deployments.

In essence, ClickHouse eliminates the need to compromise between scale and speed. It enables organizations to move away from complex, multi-system architectures and toward a simplified, high-performance, and cost-efficient analytics platform capable of supporting demanding enterprise workloads.

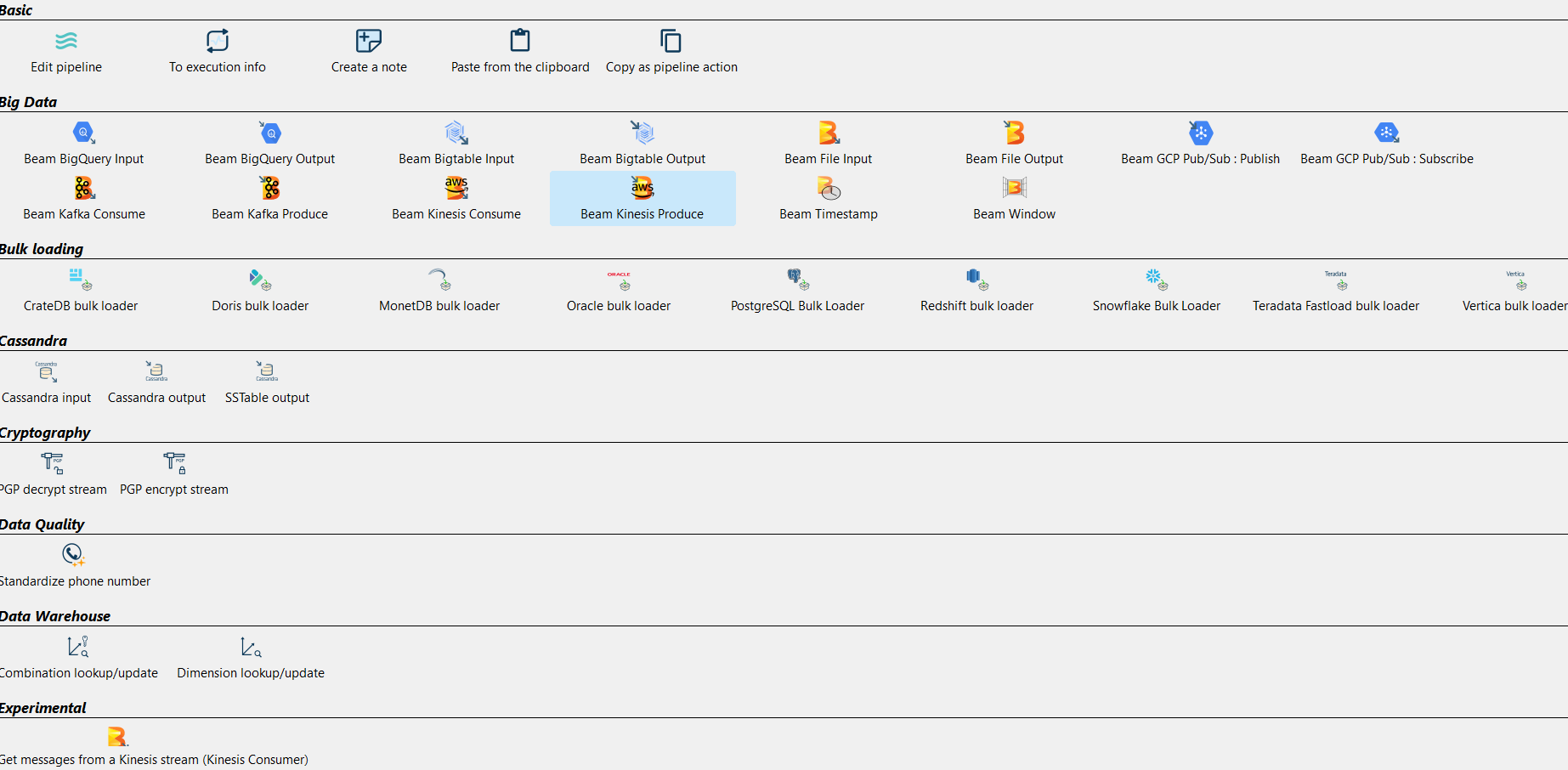

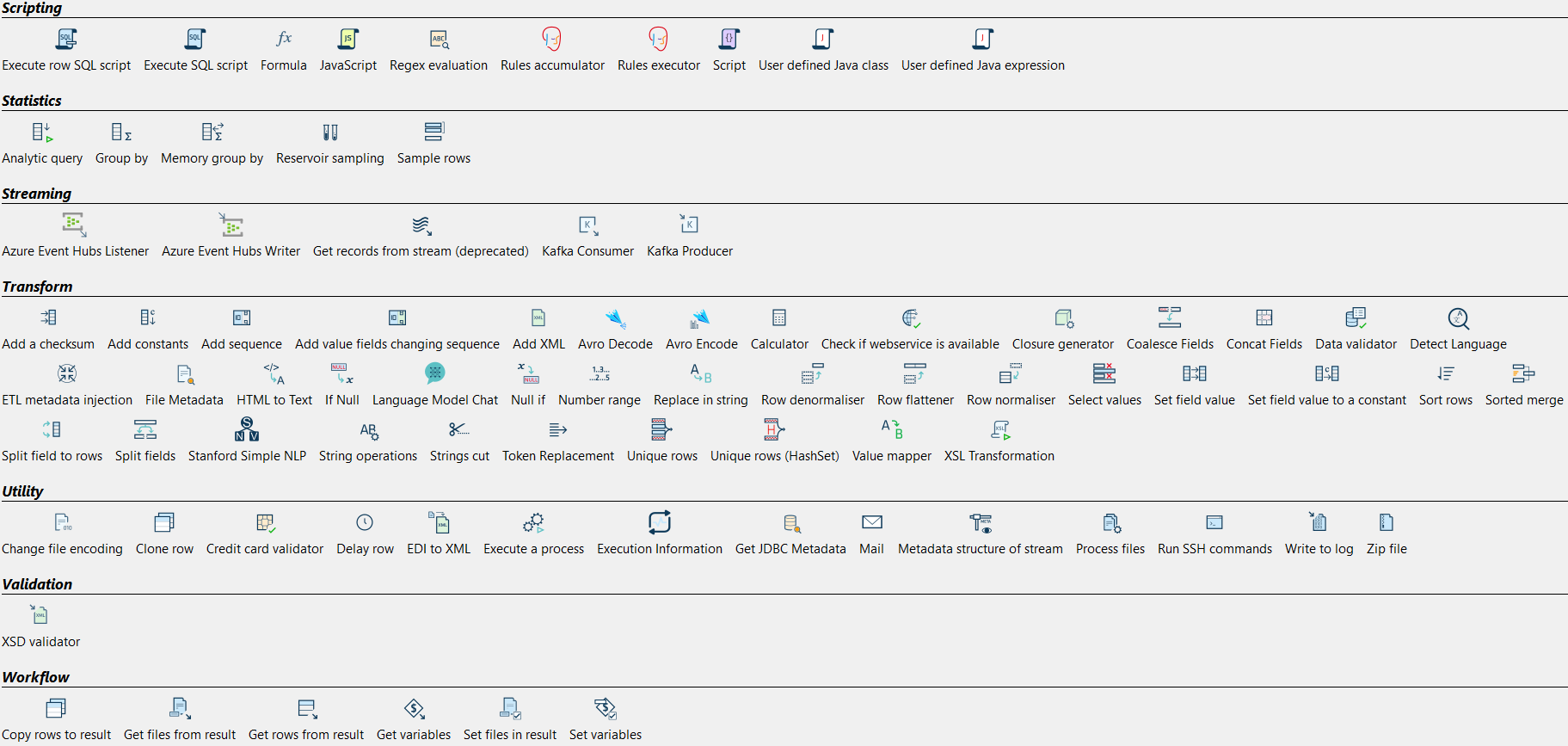

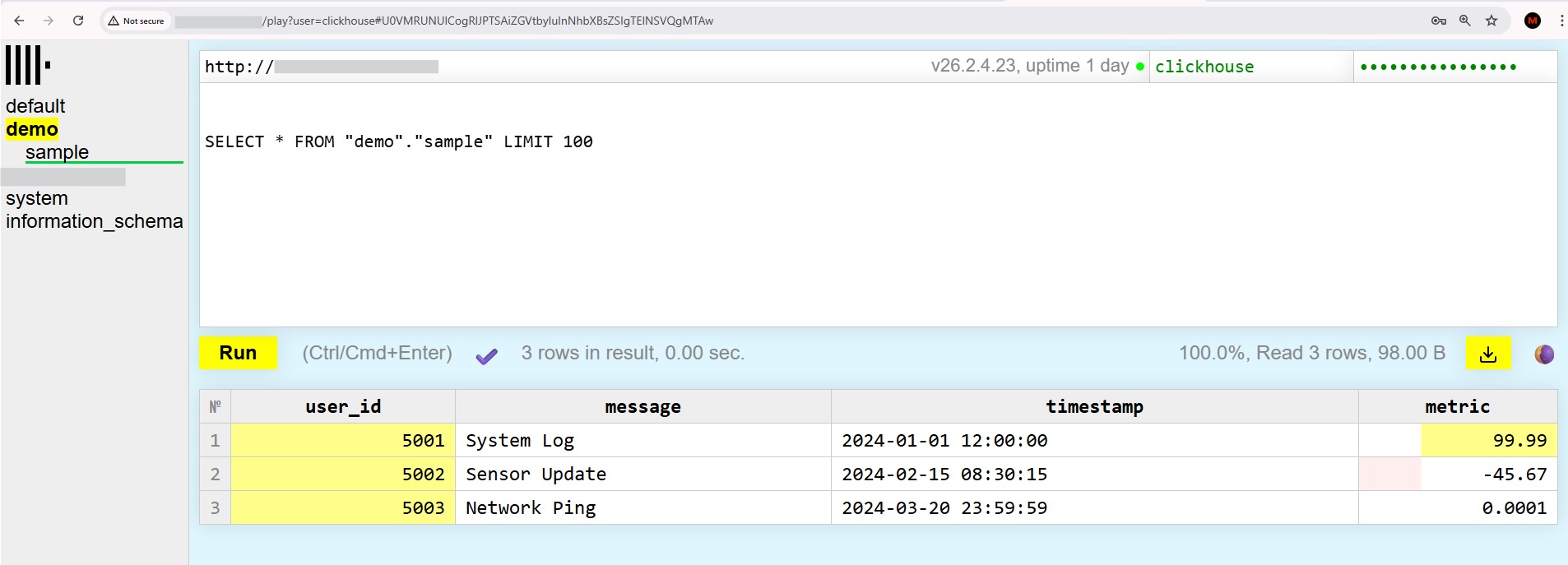

Querying & Analysis

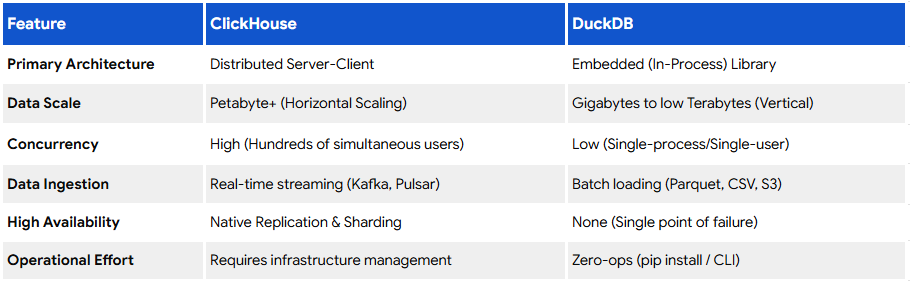

Choosing ClickHouse and DuckDB for Production-Grade Data Infrastructure

When to Choose DuckDB

DuckDB is the right choice when your analytics workload is self-contained, single-node, or moderately scaled, and you want to prioritize simplicity, performance, and tight integration with application logic.

Choose DuckDB when:

- You are working with small to medium-scale datasets that comfortably fit on a single machine

- Your use case involves embedded analytics within applications, where the database runs alongside your backend

- You need high-performance querying over files such as Parquet, CSV, or JSON without building complex ingestion pipelines

- Your workload consists of scheduled reporting, batch processing, or controlled analytical queries rather than high-concurrency access

- You want to minimize infrastructure overhead while still maintaining strong analytical performance

- You are building domain-specific analytics systems, internal tools, or departmental reporting platforms

DuckDB works exceptionally well as a lightweight analytical layer within applications, enabling fast, efficient data processing without the need for a separate database cluster.

When to Choose ClickHouse

ClickHouse is the preferred choice when your system must handle large-scale, high-concurrency, and real-time analytical workloads across distributed infrastructure.

Choose ClickHouse when:

- You are dealing with large-scale datasets (terabytes to petabytes)

- Your system requires high query concurrency from multiple users or services simultaneously

- You need real-time or near-real-time analytics, such as dashboards, monitoring, or event-driven insights

- Your architecture requires horizontal scalability across multiple nodes

- You are handling high-volume data ingestion, such as logs, telemetry, clickstreams, or transaction events

- You want to consolidate multiple analytics systems into a single, high-performance platform

ClickHouse is designed to serve as the central analytical backbone of enterprise systems, capable of supporting complex queries at scale while maintaining low latency and high throughput.
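One way a service might talk to such a central server is ClickHouse's HTTP interface, which accepts SQL through a `query` URL parameter. The sketch below uses only the Python standard library; the host, port 8123 (the default HTTP port), and the `events` table are assumptions for illustration, and authentication is left out for brevity.

```python
import urllib.parse
import urllib.request


def build_query_url(base_url: str, sql: str) -> str:
    """Build a GET URL that passes the SQL text in the `query` parameter."""
    return f"{base_url}/?{urllib.parse.urlencode({'query': sql})}"


def run_query(base_url: str, sql: str, timeout: float = 10.0) -> str:
    """Send a read-only query and return the raw text response."""
    url = build_query_url(base_url, sql)
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8")


# Hypothetical local server and table name.
url = build_query_url("http://localhost:8123", "SELECT count() FROM events")
print(url)

# Uncomment when a ClickHouse server is actually reachable:
# print(run_query("http://localhost:8123", "SELECT 1"))
```

In practice most teams would reach for an official client library or a BI connector instead, but the HTTP form shows how little is needed for many independent services to share one analytical backend.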

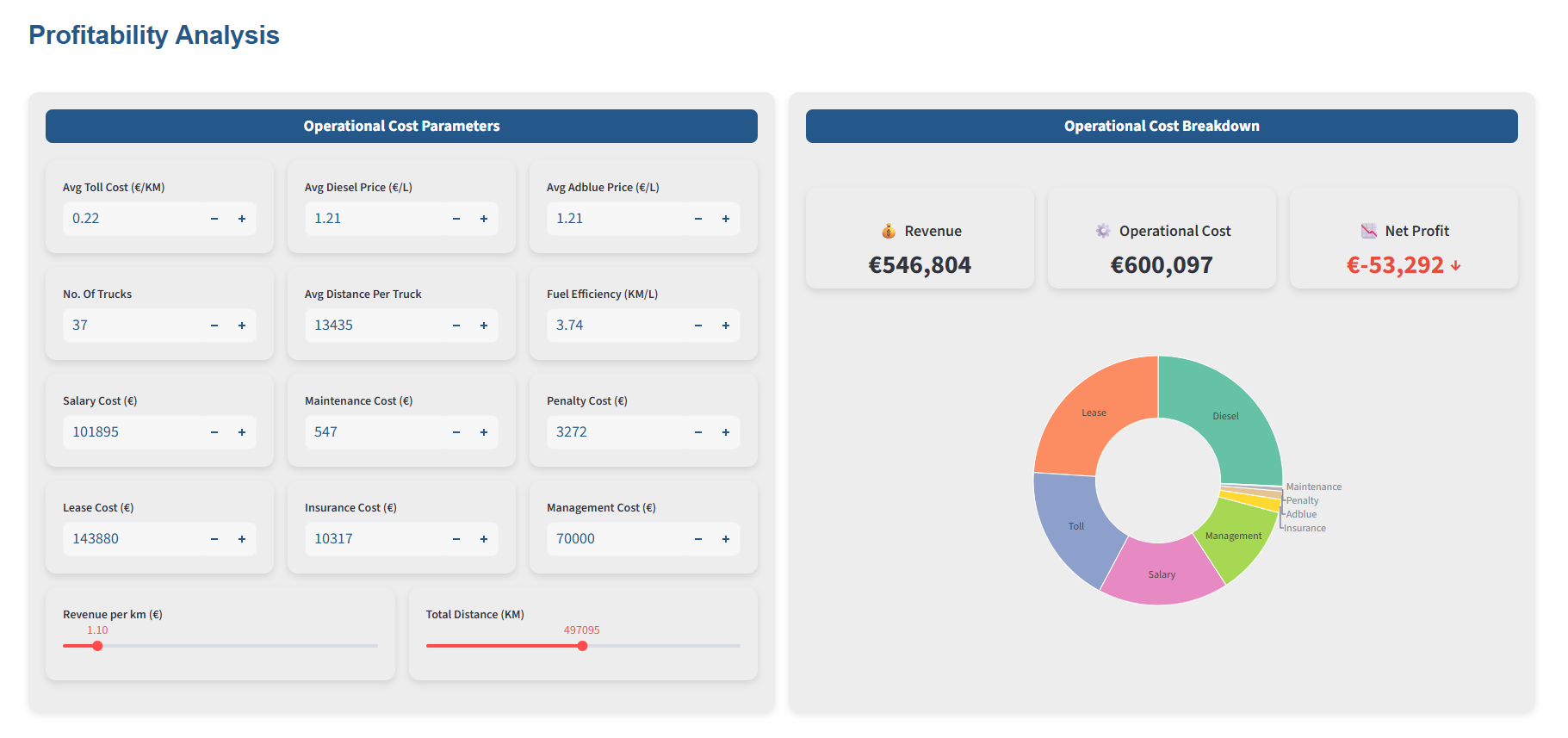

- Performance at Scale

ClickHouse is built to handle massive datasets across distributed clusters. It uses vectorized query execution, data compression, and parallel processing to deliver sub-second query performance—even on terabytes of data.

DuckDB also provides excellent performance, but primarily within a single-node environment. While it can process millions of rows efficiently, it lacks native distributed execution, making it less suitable for large-scale enterprise workloads.

Key takeaway:

- ClickHouse → Designed for big data at scale

- DuckDB → Optimized for small to medium-scale, local analytics and prototyping

- Scalability and Distributed Architecture

One of ClickHouse’s biggest advantages is its ability to scale horizontally. You can distribute data across multiple nodes and run queries in parallel, making it ideal for enterprise environments with growing data needs.

DuckDB operates within a single process. While this simplicity is powerful for developers and analysts, it limits scalability when dealing with enterprise-level data volumes.
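The difference between the two scaling models can be sketched with a toy scatter-gather example in plain Python. It is a conceptual model only, not ClickHouse code: the shard count, the hash function, and the sample events are all assumptions chosen for illustration.

```python
import zlib
from collections import Counter

NUM_SHARDS = 3  # hypothetical cluster size


def shard_for(key: str) -> int:
    """Pick a shard from a stable hash of the sharding key."""
    return zlib.crc32(key.encode("utf-8")) % NUM_SHARDS


# Hypothetical events: (user_id, page)
events = [
    ("u1", "home"), ("u2", "home"), ("u3", "pricing"),
    ("u1", "pricing"), ("u4", "home"), ("u2", "docs"),
]

# Write path: each row lands on exactly one shard.
shards = [[] for _ in range(NUM_SHARDS)]
for user_id, page in events:
    shards[shard_for(user_id)].append((user_id, page))


def local_page_counts(rows):
    """Work each shard can do independently, and therefore in parallel."""
    return Counter(page for _, page in rows)


# Read path: scatter the query to every shard, then merge the partial results.
partials = [local_page_counts(rows) for rows in shards]
merged = sum(partials, Counter())
print(merged)
```

Adding a node in this model means raising the shard count so each node scans less data. A single-process engine instead has to fit the whole scan on one machine, which is the vertical-scaling ceiling described above.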

- Real-Time Analytics Capabilities

ClickHouse supports near real-time data ingestion and querying, making it suitable for:

- Monitoring dashboards

- Event analytics

- Operational intelligence

DuckDB is better suited for:

- Batch analysis

- Near-real-time analysis at small to medium scale

- Data exploration

- Local data processing

- Concurrency and Multi-User Support

Enterprise systems often need many users to query data simultaneously without performance degradation.

ClickHouse is built with high concurrency in mind, supporting multiple users and workloads efficiently.

DuckDB is not designed for high-concurrency scenarios. It runs within a single process and is typically used by a small number of users or applications at a time.

- Ecosystem and Integrations

ClickHouse integrates well with modern data stacks, including streaming systems, BI tools, and cloud platforms. It is widely used in production environments for analytics-heavy applications.

DuckDB integrates seamlessly with BI tools, Python, R, and data science workflows, making it a favorite among analysts and researchers.

- Operational Complexity vs Simplicity

ClickHouse requires infrastructure setup, cluster management, and monitoring. This adds complexity but enables enterprise-grade capabilities.

DuckDB requires no server setup—just install and run. This simplicity makes it extremely developer-friendly.

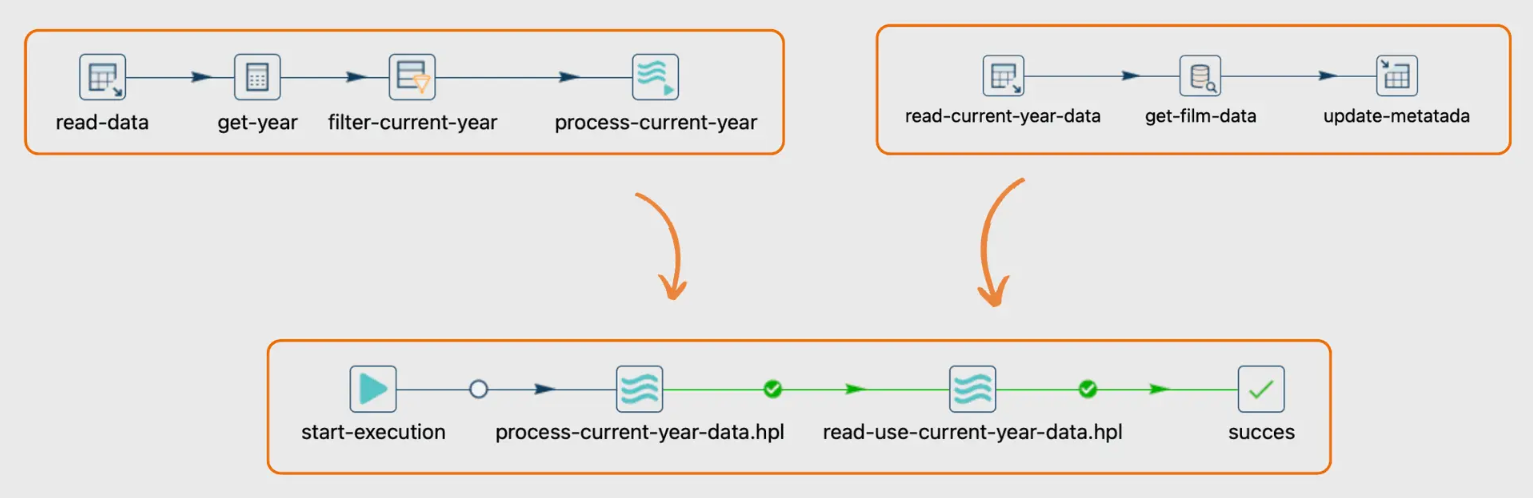

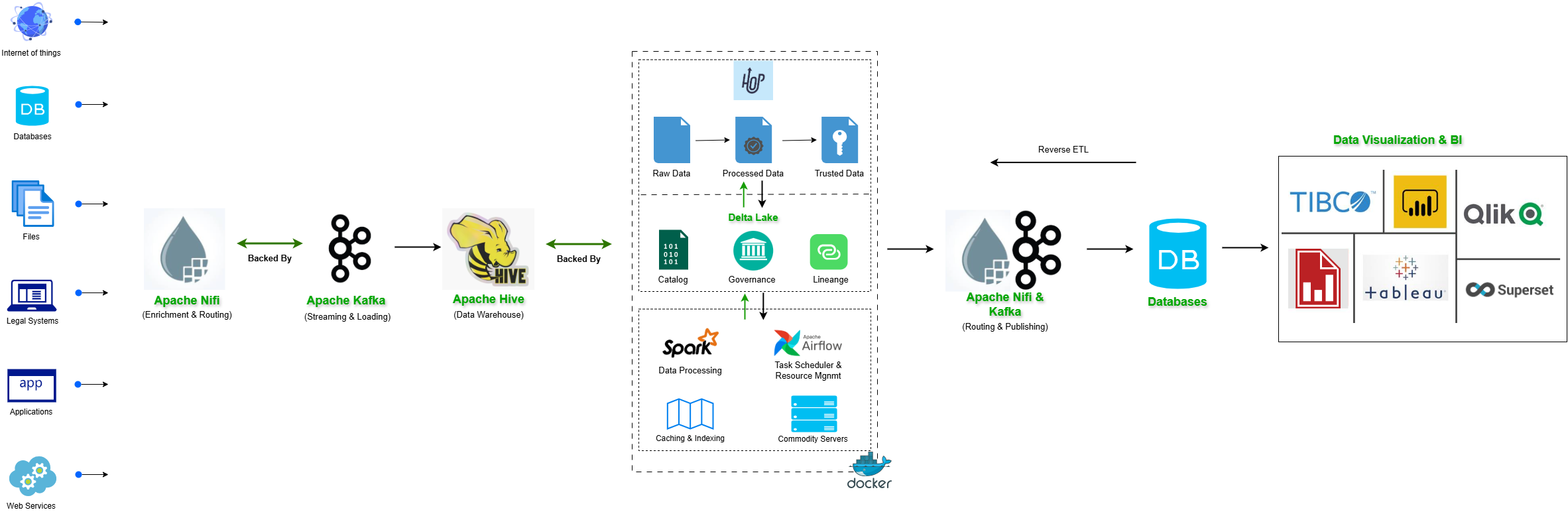

How They Work Together in Modern Architecture

Rather than choosing one over the other, many modern architectures leverage both DuckDB and ClickHouse in a tiered analytics strategy.

This combination allows organizations to build a flexible, scalable, and cost-efficient analytics ecosystem, where each tool operates within its optimal domain.

The key to scaling analytics the right way is not choosing a single database, but designing an architecture where each component plays to its strengths. DuckDB enables agility and efficiency at the edge, while ClickHouse delivers power and scalability at the core.

Together, they form a modern, production-grade analytics stack capable of evolving with your data as it grows from gigabytes to terabytes.

Decision Matrix: When to Choose What

Here is the detailed decision matrix for DuckDB and ClickHouse:

| Criteria | DuckDB | ClickHouse |
| --- | --- | --- |
| Primary Use Case | Embedded analytics, local data processing, lightweight reporting | Enterprise analytics, large-scale reporting, real-time dashboards |
| Data Scale | Small to medium (MBs to GBs) | Large to massive (GBs to TBs) |
| Architecture | Single-node, embedded OLAP | Distributed, cluster-based OLAP |
| Concurrency | Low to moderate | High (supports many concurrent users) |
| Performance Model | Fast single-node query execution | High-throughput, distributed parallel processing |
| Setup & Operations | Minimal setup, no infrastructure required | Requires cluster setup and management |
| Deployment Style | Embedded in application, notebooks, local environments | Centralized data platform / server-based |
| Data Ingestion | File-based (Parquet, CSV, etc.), lightweight pipelines | High-throughput streaming and batch ingestion |
| Scalability | Vertical scaling (limited by machine) | Horizontal scaling (distributed across nodes) |
| Best Fit For | Data scientists, small teams, embedded analytics | Enterprise data platforms, real-time analytics, large teams |
| Cost Efficiency | Extremely low (minimal infrastructure) | Optimized at scale but requires infrastructure investment |
| Typical Workloads | Ad-hoc analysis, batch jobs, local transformations | Real-time analytics, dashboards, event/log processing |
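The matrix can also be read as a rough rule of thumb. The helper below is only an illustrative sketch: the `Workload` fields and both thresholds are assumptions loosely based on the ranges discussed in this article (around a terabyte on one machine, "dozens" of concurrent queries), not hard limits of either engine.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    data_gb: float                # approximate dataset size in gigabytes
    concurrent_queries: int       # typical simultaneous analytical queries
    needs_streaming_ingest: bool  # continuous, high-volume ingestion?


# Illustrative thresholds only; tune them for your own hardware and workload.
SINGLE_NODE_LIMIT_GB = 1_000
HIGH_CONCURRENCY = 24


def suggest_engine(w: Workload) -> str:
    """Return a first-guess engine for the workload described by `w`."""
    if (
        w.data_gb > SINGLE_NODE_LIMIT_GB
        or w.concurrent_queries >= HIGH_CONCURRENCY
        or w.needs_streaming_ingest
    ):
        return "ClickHouse"
    return "DuckDB"


print(suggest_engine(Workload(data_gb=50, concurrent_queries=3, needs_streaming_ingest=False)))
print(suggest_engine(Workload(data_gb=5_000, concurrent_queries=3, needs_streaming_ingest=False)))
```

A real decision would also weigh team skills, operational budget, and latency targets, so treat the output as a starting point for discussion rather than a verdict.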

Conclusion

Both ClickHouse and DuckDB are exceptional analytical engines, but they are fundamentally designed to address different classes of problems within the data ecosystem. Rather than viewing them as competing solutions, it is more accurate to see them as complementary tools, each optimized for a specific scale and operational context.

If you are building enterprise-grade analytics systems that require the ability to handle massive data volumes, high query concurrency, and real-time responsiveness, ClickHouse clearly emerges as the stronger choice. Its distributed architecture, columnar storage, and highly optimized query execution engine make it capable of powering demanding workloads such as real-time dashboards, large-scale event analytics, and multi-user reporting systems. It is designed to scale horizontally, ensuring consistent performance even as data and user demand grow exponentially.

ClickHouse becomes especially valuable in environments where:

- Multiple teams or services query the same datasets simultaneously

- Data pipelines continuously ingest high-velocity data streams

- Performance and latency requirements are critical to business operations

- A centralized, scalable analytics platform is required to serve the entire organization

On the other hand, DuckDB remains an invaluable and highly efficient solution for fast, local, and embedded analytics within small to medium-scale systems. Its strength lies in enabling powerful analytical capabilities without the need for complex infrastructure or heavy operational overhead. DuckDB allows teams to perform sophisticated data processing directly within applications, notebooks, or services, making it ideal for environments where agility, simplicity, and tight integration with application logic are key priorities.

DuckDB is particularly well-suited for:

- Local data analysis and exploration on structured datasets

- Embedded analytics within applications and microservices

- Lightweight reporting systems and scheduled analytical jobs

- Workflows where data is processed close to its source, reducing movement and complexity

In a well-architected modern data ecosystem, both tools can coexist effectively. DuckDB often serves as the edge or embedded analytical engine, handling localized processing and transformation, while ClickHouse acts as the centralized, high-performance analytics backbone powering large-scale queries and enterprise reporting.

Ultimately, the choice between DuckDB and ClickHouse is not about superiority, but about contextual fit. Understanding when to leverage each tool allows organizations to design data architectures that are not only high-performing, but also scalable, cost-efficient, and aligned with real-world analytical demands.

Official Links for ClickHouse and DuckDB

The following official documentation and resources were referred to while writing this blog about ClickHouse and DuckDB. Below is a list of key official links:

ClickHouse Official Resources:

ClickHouse Blog Series

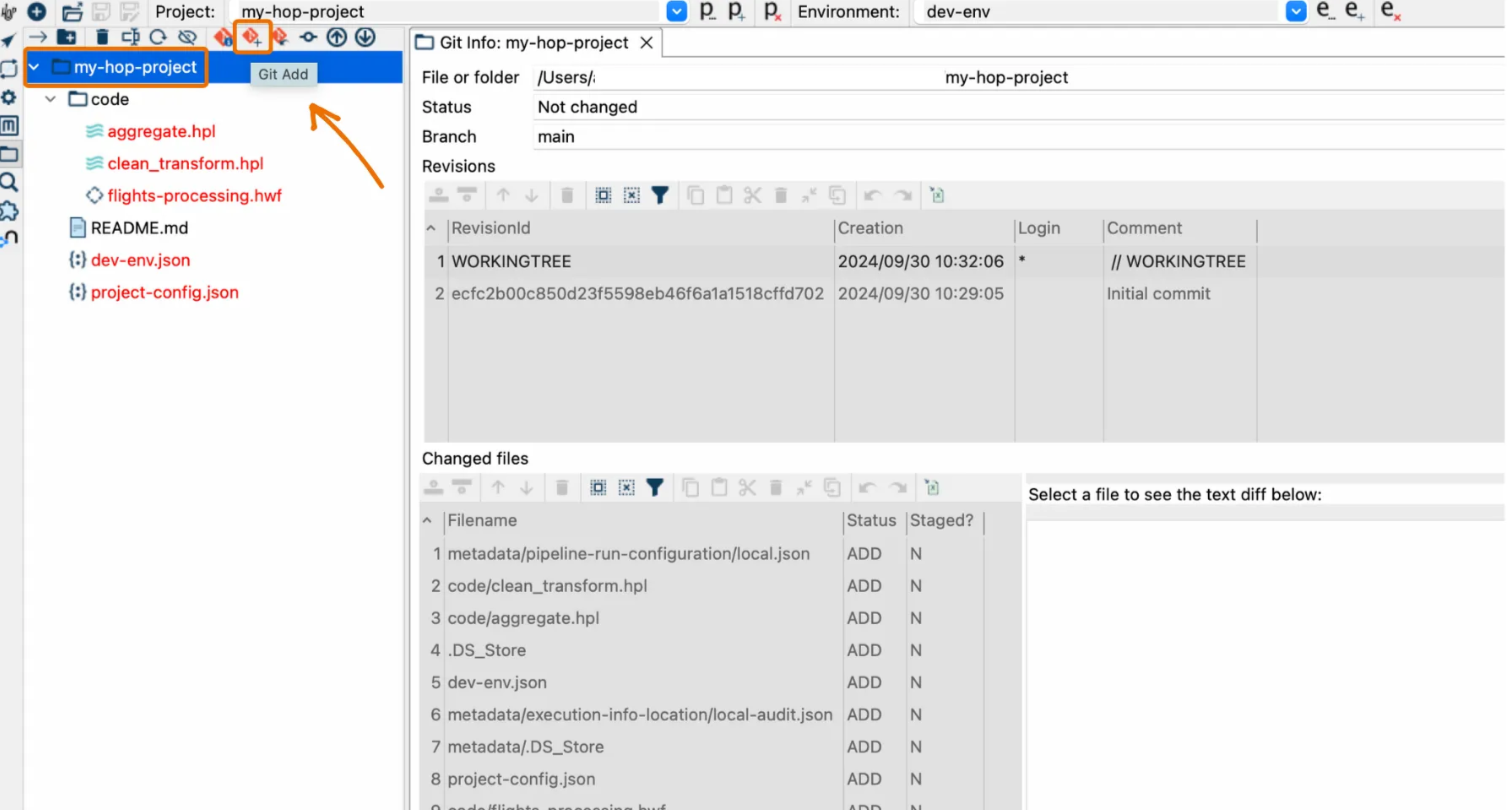

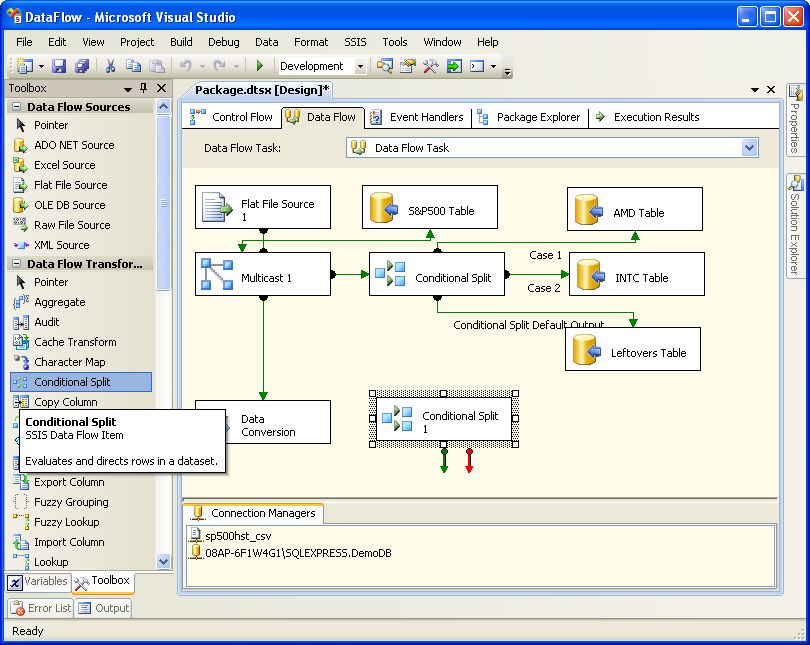

- Scaling Analytics the Right Way: Positioning DuckDB and ClickHouse Effectively (Part1)

- Breaking the Limits of Row-Oriented Systems: A Shift to Column-Oriented Analytics with ClickHouse (Part2)

- Building a Modern Data Platform: A Real-World Implementation of Apache Hop and ClickHouse (Part3)


If you would like to enable this capability in your application, please get in touch with us at analytics@axxonet.net or visit analytics.axxonet.com